A lot of current discussion about AI agent safety treats "what could the agent do on its own" as the central question. I think this framing is backwards, and once you fix it, a surprising amount of the hand-wringing dissolves into a problem we already know how to solve: identity, delegation, and capability propagation.

Agents don't need identities. Systems need three things: principals, constraints, and receipts. Anchor every action to a human principal. Enforce constraints at the execution layer. Record reasoning alongside outcomes. Do those, and agent safety becomes a tractable systems problem rather than a metaphysical one.

The Core Claim

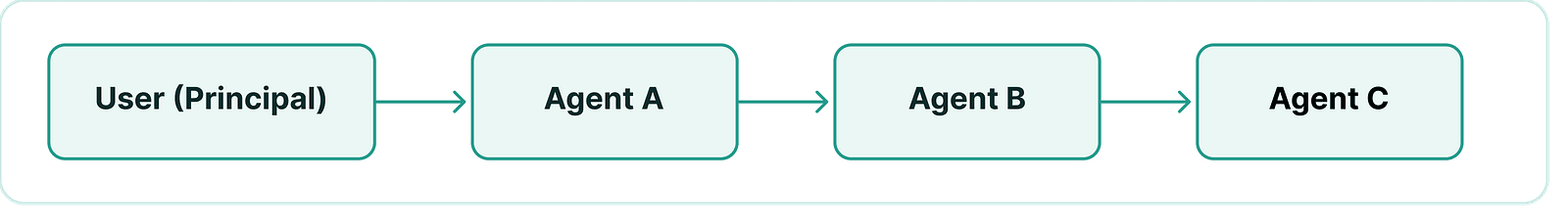

An agent should not have a free-standing identity in the security sense. The identity that matters, the one from which authorization, accountability, and audit all hang, is the identity of the human who initiated the action. That principal should propagate through every downstream agent call. If agent A invokes agent B, B is acting on behalf of the original human, not on behalf of A and not on its own behalf.

Every action in the chain remains attributable to the original principal.

When this is done properly, the worry that "an agent might do something it shouldn't" becomes much less interesting. An agent can only do what its triggering principal is authorized to do, attenuated by whatever guardrails are baked into the call path. There are no autonomous "wants" for the agent to exercise. It executes within a delegated capability envelope or it does nothing.
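To make the propagation rule concrete, here is a minimal Python sketch. The `CallContext` type and the agent functions are hypothetical names invented for illustration; they are not part of WeilChain or the Cerebrum SDK. The point is only that the principal field is copied unchanged at every hop while the chain of agents grows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallContext:
    """Hypothetical per-call context (illustrative, not a real SDK type)."""
    principal: str                 # the human who initiated the chain
    chain: tuple[str, ...] = ()    # agents traversed so far, in order

    def delegate(self, agent: str) -> "CallContext":
        # The principal is carried over unchanged; only the chain grows.
        return CallContext(self.principal, self.chain + (agent,))


def agent_b(ctx: CallContext) -> str:
    ctx = ctx.delegate("agent_b")
    return f"{ctx.principal} via {' -> '.join(ctx.chain)}"


def agent_a(ctx: CallContext) -> str:
    ctx = ctx.delegate("agent_a")
    # A forwards the context; B never sees A as its principal.
    return agent_b(ctx)


result = agent_a(CallContext(principal="alice"))
print(result)
```

Because the context is immutable and `delegate` is the only way to derive a new one, no agent in this sketch can substitute itself as the principal.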

This is not a new idea. Capability-based security, OAuth delegation, and SPIFFE-style workload identity have all been moving in this direction for years. What's new is the substrate.

Propagation, Not Impersonation

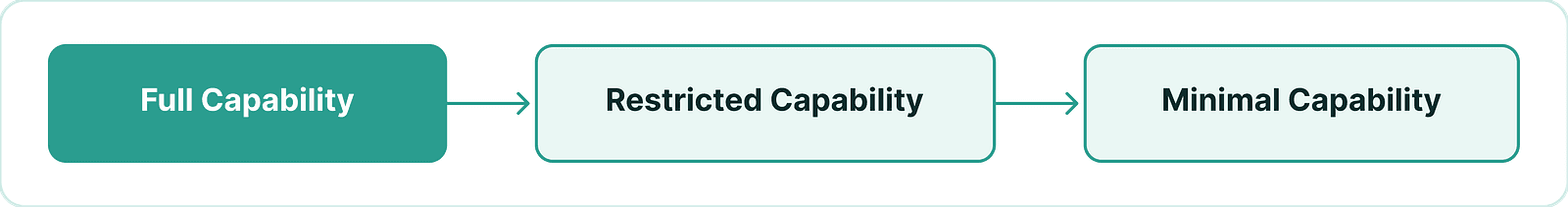

The right way to think about call chains is propagation with attenuation. The user U invokes agent A. A invokes agent B. B invokes agent C. At every hop, U remains the originating principal. Each contract along the way enforces its own policy, which can only narrow what U was originally authorized to do, never broaden it.

Authority narrows at each step, forming a funnel of decreasing power.

The audit record captures the full chain: U → A → B → C, with every hop attributable. This eliminates an entire class of ambiguity that plagues service-account architectures, where actions taken by background services are attributed to the service rather than the human who set them in motion, and accountability fragments accordingly.

Capability minimization at each hop also converts the question "did this agent decide correctly?" into the much weaker question "is this agent's worst possible decision still acceptable?"—which is far easier to reason about.
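The narrowing at each hop, and the worst-case question it enables, can be sketched as set intersection plus a minimum over limits. The `Envelope` type, the action names, and the spend figures below are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Hypothetical capability envelope: allowed actions plus a spend cap."""
    actions: frozenset[str]
    max_spend: int

    def attenuate(self, policy: "Envelope") -> "Envelope":
        # Intersection and min can only narrow authority, never broaden it.
        return Envelope(self.actions & policy.actions,
                        min(self.max_spend, policy.max_spend))


user = Envelope(frozenset({"read", "transfer", "trade"}), 1000)
policy_a = Envelope(frozenset({"read", "transfer"}), 500)
# B's policy lists "admin" and a higher cap, but neither survives attenuation.
policy_b = Envelope(frozenset({"read", "transfer", "admin"}), 2000)
policy_c = Envelope(frozenset({"transfer"}), 100)

at_a = user.attenuate(policy_a)
at_b = at_a.attenuate(policy_b)
at_c = at_b.attenuate(policy_c)

# The worst thing agent C can do is fully described by its envelope.
print(sorted(at_c.actions), at_c.max_spend)
```

In this sketch, the answer to "is C's worst possible decision acceptable?" is read straight off `at_c`: a single transfer of at most 100, regardless of how C reaches its decision.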

Contracts, Not Tokens

Traditional systems rely on tokens that describe permissions. A token says what a principal is permitted to do; it depends on the receiving system to honor it. Contract-based systems enforce what can actually happen. Constraints like rate limits, allow-lists, withdrawal caps, and multi-sig thresholds are embedded directly into execution logic, making them non-bypassable.

On WeilChain, every agent is a smart contract. That gives the agent a contract address, which serves as a structural identifier useful for audit and revocation, derived from deployment rather than asserted by the agent itself. The principal driving any action remains the human who initiated the call chain.

A smart-contract agent gives you three things at once that traditional architectures have to assemble piecemeal:

- A deterministic, verifiable identifier.

- Deterministic code that can be inspected before invocation.

- Policy baked directly into that code—so constraints aren't merely declared in a token the agent is asked to honor, but enforced at the execution layer the agent cannot bypass.

This is meaningfully stronger than token-scoped authorization, because enforcement no longer depends on a receiving system choosing to honor what a token declares. The Cerebrum SDK on WeilChain takes this further by making the agent-as-contract pattern the default unit of construction.
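As a rough illustration of constraints living inside execution logic, the following Python class plays the role of an agent contract. It is a toy stand-in: the class, its limits, and its method names are hypothetical, and plain Python cannot make the checks truly non-bypassable the way a chain runtime can. What it shows is the shape of the pattern, where the policy checks sit in the same code path as the state change.

```python
class PolicyViolation(Exception):
    """Raised when a call falls outside the contract's constraint envelope."""


class TransferAgent:
    """Toy stand-in for an agent contract; names and limits are hypothetical."""

    def __init__(self, allow_list: set[str], withdrawal_cap: int) -> None:
        self._allow_list = frozenset(allow_list)
        self._cap = withdrawal_cap
        self.balance = 1_000
        self.log: list[tuple[str, str, int]] = []

    def transfer(self, principal: str, to: str, amount: int) -> int:
        # The checks precede the debit in the only path that performs it.
        if to not in self._allow_list:
            raise PolicyViolation(f"{to} is not on the allow-list")
        if amount <= 0 or amount > self._cap:
            raise PolicyViolation(f"amount {amount} is outside (0, {self._cap}]")
        if amount > self.balance:
            raise PolicyViolation("insufficient balance")
        self.balance -= amount
        self.log.append((principal, to, amount))  # attribute to the human
        return self.balance


agent = TransferAgent({"treasury"}, withdrawal_cap=200)
remaining = agent.transfer("alice", "treasury", 150)

try:
    agent.transfer("alice", "attacker", 10)
    blocked = False
except PolicyViolation:
    blocked = True
```

A token-based design would hand the caller a statement like "may transfer up to 200" and trust each service to check it; here the caller has no way to reach the debit without passing the checks.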

Long-Running Workflows Stop Being a Problem

One of the perennial pain points in agentic systems is long-running workflows—jobs that span hours, days, or longer, often crossing system boundaries. In conventional architectures these force credential re-issuance, service accounts, and the gradual erosion of identity over time. Who really authorized this thing that's been running for a week?

In the principal-propagation model the concern dissolves. The principal is carried through the workflow regardless of time or system boundaries. Identity, attribution, and control are preserved without service accounts standing in for absent humans. A workflow that runs for a month is still attributable to the human who launched it, bounded by the same capability envelope they granted on day one.
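One way to picture this is a workflow checkpoint that stores the principal and the original envelope alongside progress, so a resumed job needs no fresh credentials. The `WorkflowState` layout and the JSON persistence below are assumptions for illustration only, not a description of how WeilChain stores workflow state.

```python
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class WorkflowState:
    """Hypothetical checkpoint for a long-running job."""
    principal: str   # fixed at launch, never rewritten
    max_spend: int   # envelope granted on day one
    spent: int
    step: int


def save(state: WorkflowState) -> str:
    return json.dumps(asdict(state))


def load(blob: str) -> WorkflowState:
    return WorkflowState(**json.loads(blob))


def run_step(state: WorkflowState, cost: int) -> WorkflowState:
    # The day-one envelope still bounds every later step.
    if state.spent + cost > state.max_spend:
        raise PermissionError("step exceeds the envelope granted at launch")
    return replace(state, spent=state.spent + cost, step=state.step + 1)


day_one = WorkflowState(principal="alice", max_spend=100, spent=0, step=0)
blob = save(run_step(day_one, 40))   # persisted; the process may stop here

weeks_later = run_step(load(blob), 40)
print(weeks_later.principal, weeks_later.spent, weeks_later.step)
```

However long the gap between `save` and `load`, the resumed state names the same principal and the same limit; a third step costing 40 would raise `PermissionError`.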

The Decision-Integrity Problem

Even with clean identity propagation and capability-bounded contracts, one question remains. Deterministic contract execution audits the call. It does not, by itself, audit the decision to make the call. If an agent's logic includes any input-dependent branching, and the input comes from outside the trust boundary, then a manipulated input can produce an authorization-valid but unintended action. This is where most real-world failures will happen.
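A small sketch of the failure mode, using a hypothetical invoice format and a toy parser in place of the agent's decision logic. The allow-list, cap, and field names are invented for the example. Both calls pass the execution-layer check; only one reflects what the principal intended.

```python
ALLOW_LIST = {"treasury", "vendor"}   # hypothetical policy values
CAP = 200


def authorized(to: str, amount: int) -> bool:
    # Execution-layer check: all the contract itself can verify.
    return to in ALLOW_LIST and 0 < amount <= CAP


def decide(invoice_text: str) -> tuple[str, int]:
    # Toy decision logic that branches on untrusted input.
    # An LLM-driven agent differs in mechanism, not in exposure.
    fields = dict(part.split("=", 1) for part in invoice_text.split(";"))
    return fields["pay_to"], int(fields["amount"])


honest = decide("pay_to=treasury;amount=50")
tampered = decide("pay_to=vendor;amount=200")   # invoice edited upstream

print(authorized(*honest), authorized(*tampered))
```

The envelope still does its job here, since the tampered payment cannot exceed the cap or leave the allow-list, but nothing at the execution layer can tell the two decisions apart.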

This isn't a problem specific to LLM-driven agents. It applies to any agent whose behavior is input-dependent. But it is the problem that the loudest recent panic about AI agents is really pointing at, even when it's framed clumsily as "the agent might go rogue."

Receipts as the Answer

Every action should produce a receipt capturing what went in, what was thought, what was called, what came out, and what changed.

WeilChain's implementation logs the entire LLM response on chain alongside the action it triggers—reasoning trace, tool calls, all of it. The reasoning becomes part of the audit record, not just the call. You can reconstruct what the agent was thinking when it acted. You can build downstream machinery on top: dispute resolution, insurance, statistical detection of injection patterns, slashing of agents whose recorded reasoning shows clear deviation from their declared policy. Most off-chain systems lose all of this the moment inference completes.
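The receipt idea can be sketched off-chain as a hash-linked log. The field names below are assumptions that mirror the five items listed above (what went in, what was thought, what was called, what came out, what changed); they are not WeilChain's actual on-chain format. Each receipt stores the digest of its predecessor, so altering a recorded reasoning trace after the fact breaks verification.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace

GENESIS = "0" * 64  # placeholder digest for the first receipt


@dataclass(frozen=True)
class Receipt:
    """Hypothetical receipt layout; field names are illustrative."""
    principal: str
    chain: tuple[str, ...]
    inputs: str        # what went in
    reasoning: str     # what was thought (the model's self-reported trace)
    call: str          # what was called
    output: str        # what came out
    state_change: str  # what changed
    prev_hash: str     # digest of the previous receipt

    def digest(self) -> str:
        body = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(body).hexdigest()


def verify(log: list[Receipt]) -> bool:
    prev = GENESIS
    for receipt in log:
        if receipt.prev_hash != prev:
            return False
        prev = receipt.digest()
    return True


r1 = Receipt("alice", ("agent_a",), "invoice #1", "amount is within cap",
             "transfer(treasury, 50)", "ok", "balance 1000 -> 950", GENESIS)
r2 = Receipt("alice", ("agent_a", "agent_b"), "invoice #2", "payee is allowed",
             "transfer(vendor, 20)", "ok", "balance 950 -> 930", r1.digest())

intact = verify([r1, r2])
rewritten = verify([replace(r1, reasoning="edited later"), r2])
print(intact, rewritten)
```

This only demonstrates tamper evidence for the record itself. It does not show that the recorded reasoning matches what the model actually computed.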

It's worth being precise about what this does and doesn't solve. It converts decision-integrity from an invisible property into an auditable one. It makes misbehavior durably attributable, in a form that civil and criminal accountability systems already know how to work with. Two residual gaps remain: a model's reasoning trace is its self-report rather than its actual computation, and logging is forensic rather than preventive. Both are narrower and more tractable than the problem we started with. Closing them further leads naturally toward verifiable inference substrates: TEE attestation, zkML, and similar techniques that move trust from the model's self-report to hardware or mathematics.

Where This Leaves Us

The standard worry that "agents need to be controlled because we don't know what they'll do" rests on a model where the agent has standalone authority and ambiguous accountability. Take both of those away—make the principal a human, propagate that principal through every hop, bake the constraint envelope into the contract layer, and log the reasoning that drove each decision—and the worry shrinks substantially. What's left is a forensic accountability system that's strictly stronger than what most production environments run today, and a residual decision-integrity problem that's now visible enough to attack with verifiable inference techniques.

Agents don't need their own identity to be safe. They need to be incapable of acting outside the chain of human delegation, transparent about what they did and why, and bounded by enforcement that lives below them rather than above them. Smart-contract agents on a chain that logs reasoning give you all three.

Agent safety is not about controlling intelligence. It's about structuring systems correctly. Principal-based identity, contract-level enforcement, and receipt-based auditability together produce verifiable, governable, production-ready agentic systems.

At Weilliptic, this model is not theoretical. If you're building or deploying AI agents in production, the question isn't whether they can act. It's whether you can prove—with certainty—who authorized those actions, what constraints were enforced, and what actually happened.